As I’m putting the (hopefully) final touches on a short textbook that I’m writing entitled “Handbook on Science Literacy”, I’ve been thinking a lot about how to recommend a person go about systematically investigating a scientific issue without having any background in it. Sure, you can learn how to read and understand a scientific article, but let’s be honest—far too many people choose instead to do a quick web search and let that settle the question. This practice works okay in some instances, but in others it produces misleading or wrong answers.

I want to share with you my strategies for flunking out of the University of Google.

This is one instance where flunking is a good thing. A graduate of the University of Google chooses to accept only information that supports his or her position, and ignores or dismisses information in conflict with it. A graduate of the University of Google will not be able to answer the question “What kind of evidence would change your mind on this subject?” It’s insidious, because once their opinions are formed in this way, they tend to identify with other people who share those opinions, and any new information that comes their way will be accepted or rejected on the basis of the position they’ve already taken (the cultural cognition effect).

None of us want to be that kind of person.

Flunking out requires a decent amount of work, and the willingness to accept that you might be wrong about a subject from time to time. You’ll need to become more aware of your own cognitive biases, and have some strategies for overcoming them.

So as a preliminary step down the road to science literacy, I’ve put my thoughts on this together into a guide to learning about a subject in which you have no background. It’s an exercise; please don’t shortcut the process and go to Wikipedia, or you’ll miss the whole point.

1. Start by identifying a question that you want to find an accurate answer to, such as “Do vaccines cause autism?” or “Is genetically modified food harmful to human health?”, or “Do cell phones cause cancer?”. Try to keep this as specific as possible. Remember: one question can build upon another, but answer them one at a time.

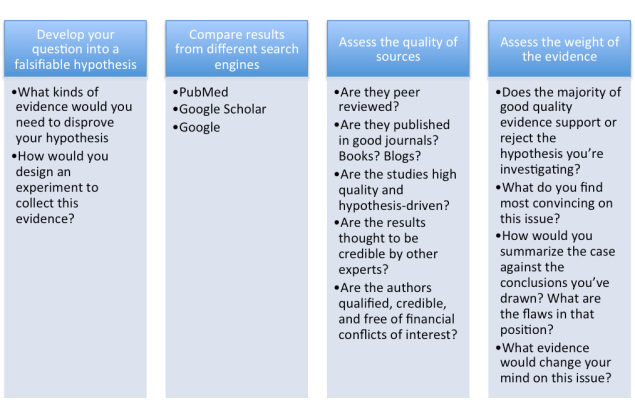

2. Now turn that question into a testable null hypothesis. In the examples above, your hypotheses could be:

- “Vaccines do not cause autism”

- “Genetically modified food is not harmful to human health.”

- “Cell phones do not cause cancer.”

Remember that in order to accurately answer your question, your hypothesis must be falsifiable! That is, you must be able to articulate what kind of evidence would disprove it. If you can’t, it might not be a scientific question, or you might have already made up your mind on the subject. Be honest with yourself: are you trying to find the truth on a subject, or just the kind of information you’re comfortable with?

3. Write down a few notes on what kinds of evidence would disprove your hypothesis. How would you design an experiment to collect this evidence?

4. Open up three separate browser windows: regular Google, Google Scholar, and PubMed. PubMed is at:

http://www.ncbi.nlm.nih.gov/pubmed

5. Type key words from your hypothesis into the search bar of each page. Examine the first few pages of results from each search, taking notes that summarize what you found in each. Note the type of publication and, as best as you can tell, whether it was likely peer reviewed; peer review matters a great deal when judging a source. Journals indexed on PubMed will be peer reviewed*. Books often aren’t peer reviewed, so I tend to assume they aren’t unless I know otherwise.
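If you would rather generate the three searches than type them by hand, the step above can be sketched in a few lines of Python. This is only an illustration: the function name `build_search_urls` is hypothetical, and the base addresses and query-parameter names (`q`, `term`) are assumptions based on how each site's search URLs currently appear in a browser address bar, so they may change.

```python
from urllib.parse import urlencode

# Assumed search endpoints and query-parameter names (not from the post);
# check them against your own browser's address bar before relying on them.
SEARCH_ENGINES = {
    "Google": ("https://www.google.com/search", "q"),
    "Google Scholar": ("https://scholar.google.com/scholar", "q"),
    "PubMed": ("https://pubmed.ncbi.nlm.nih.gov/", "term"),
}


def build_search_urls(keywords):
    """Return one search URL per engine, all using the same key words."""
    query = " ".join(keywords)
    return {
        name: f"{base}?{urlencode({param: query})}"
        for name, (base, param) in SEARCH_ENGINES.items()
    }


if __name__ == "__main__":
    # Key words taken from the example hypothesis "Vaccines do not cause autism".
    for name, url in build_search_urls(["vaccines", "autism"]).items():
        print(f"{name}: {url}")
```

Using the identical key words in every window is the point of the sketch: any difference in the results then comes from the search engine, not from how you phrased the query.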

Here are notes on the first two results from my Google Scholar search just to give you an idea of how I do it:

Vaccines, autism, chronic inflammation: the new epidemic—book published by the National Vaccine Information Center (probably not peer reviewed)

Vaccines and autism: evidence does not support a causal association—journal article published in Clinical Pharmacology and Therapeutics (peer reviewed)

Once you have notes summarizing your results from all three types of search engines, answer the following questions:

- As best as you can tell from their titles, how many of your results support your hypothesis? How many reject your hypothesis?

- How do the results of your search differ between Google Scholar, PubMed, and regular Google? Why do you think that is?
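If your notes are getting long, a small script can do the counting for the two questions above. The sketch below is one possible way to record notes, not a required format: the `Note` fields and the `tally` function are hypothetical names, and the stance labels on the two sample entries are an illustrative reading of the titles against the hypothesis “Vaccines do not cause autism”.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Note:
    engine: str          # "Google", "Google Scholar", or "PubMed"
    title: str
    peer_reviewed: bool  # best guess from the type of publication
    stance: str          # "supports", "rejects", or "unclear" (judged from the title)


def tally(notes):
    """Count stances toward the hypothesis separately for each search engine."""
    counts = {}
    for note in notes:
        counts.setdefault(note.engine, Counter())[note.stance] += 1
    return counts


# The two Google Scholar results noted above; the stance values are
# illustrative guesses from the titles, not judgments made in the post.
notes = [
    Note("Google Scholar",
         "Vaccines, autism, chronic inflammation: the new epidemic",
         peer_reviewed=False, stance="rejects"),
    Note("Google Scholar",
         "Vaccines and autism: evidence does not support a causal association",
         peer_reviewed=True, stance="supports"),
]

if __name__ == "__main__":
    for engine, stances in tally(notes).items():
        print(engine, dict(stances))
```

Keeping the counts separate per search engine makes the second question easier to answer, because you can compare the three tallies side by side once you have added your Google and PubMed notes.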

6. Now choose a few of the references you found. How many you look at is up to you, and dependent on constraints such as time, which articles are open access, which books you can actually see online or in a library, etc. Just keep in mind that if you really want accurate information on a subject, the more references you look at, the better.

Be sure to look at both references that seem to support and references that seem to refute your hypothesis. You may need to look at the abstracts of journal articles to determine this. Yes, I have said elsewhere that you shouldn’t start with an abstract when you’re reading a paper. But in order to find relevant papers, the abstract can be very helpful!

I highly recommend that you actually take the time to carefully read through the papers. But if you’re struggling, try to focus on extracting these pieces of information from the source:

- What are the authors trying to test, specifically?

- What is their takeaway message?

- What is the evidence they put forward to support their takeaway message?

- What is the quality of the research design? Does it test a hypothesis? Does it have a large number of subjects? For medical studies, what type of study is it? Use this excellent guide to help you assess the level of evidence in a medical study.

Now look at the source itself. If it’s in a journal, Google the journal and see whether it’s credible. For example, contrast the journals Lancet and Medical Hypotheses. In which do you think you’ll find more accurate information?

Now look at the authors of the papers, books, or web articles you’ve found. Do they have the appropriate expertise in the subject you’re researching? What do you find when you Google them? Are they respected by other experts who are working on the same issue? What are some of the criticisms of them that you find? Do you find those criticisms credible or troubling? Do they have any financial conflicts of interest in the subject that they’re researching such as industry positions, or products for sale on their website that purport to “cure” whatever condition they’re publishing about?

Hopefully you can now see that when you’re doing a search for information, the quality of the results matters. You’re going to get more accurate information from PubMed (or equivalent search engines in relevant disciplines) than you will from Google. You’re going to get more trustworthy, evidence-based information from peer-reviewed papers than from random websites. You’re going to get better, more reliable information from good journals than from bad journals.

7. At this stage, revisit your hypothesis. Do you think the evidence supports or rejects it? Did the evidence you found fit with the notes you wrote in step 3? What specifically do you find most convincing on this issue? Why is that?

8. How would you summarize the case against the conclusions you’ve drawn? What are the flaws in the arguments advanced by that position? What evidence do they lack?

9. Finally, but perhaps most importantly: What further evidence would change your mind on this issue?

I welcome your suggestions and feedback on this guide. If you’d like to read more strategies for becoming scientifically literate, my book will be out this fall. I’ll update this post with a link to it, as soon as it’s available.

*Note that PubMed doesn’t index all peer-reviewed journals from all disciplines. You may need to substitute a different search engine for this. (I use Google Scholar a lot).

Many thanks to Colin, Dorit, and Grant for their helpful feedback on this post.

Thank you. This is a really helpful set of guidelines on how to really learn by researching online.

Thanks, Dorit!

Brilliant. I’ll be using this exercise with my public health class–

Many thanks!

Thank you!

It’s a very useful idea to provide this sort of guide.

Here are a few more tricks that I use.

(1) When googling, include the words “criticism” or “quackery” in addition to the word you are searching for. It is quite common for most of the first page of search results to come from people who are trying to sell you something. Including these words will bring up the other side of the story.

(2) Ignore entirely information from people who are trying to get money from you. Also ignore the “personal testimonials” which they love to cite.

(3) Be aware that because something has been peer-reviewed doesn’t guarantee that the results are right, or even that they have been done well. Pubmed indexes something like 30 journals devoted to quackery. The papers are peer-reviewed by other quacks and they are rarely reliable or good.

(4) Don’t expect to be able to find the true answer to any question. There are huge areas where not enough is known to give a firm answer (but also be aware that some people are not very good at saying “I don’t know” when that is what they should say).

Excellent. Thank you David!!!!

“9. Finally, but perhaps most importantly: What further evidence would change your mind on this issue?”

Transparency from both sides

Analysis of raw data, not interpretations of data, by parties with no conflict of interest.

Acknowledge people’s concerns and address them with unbiased information, not with smart remarks or insults.

When dismissing a source of information because of its discredited status, disclose the criminal history of your own chosen source of information, i.e., drug manufacturers who are repeatedly hit with criminal fines for fraud, misbranding drugs to mislead the user, false safety statements, bribery of doctors, promoting drugs for unapproved uses, etc. You must also include an explanation to justify trusting any study funded by them, given their very public history of data manipulation and fraudulent marketing.

Stop omitting details when discrediting other resources.

Stop calling demands for safer drugs anti-medicine.

Stop the talking down to, patronizing, and dismissal of parents with sick children whom none of you have treated or bothered to get to know, and whose medical records none of you have looked at. Medical errors due to lack of information about the patient include doctors who refuse to listen to the parents’ report of their child’s condition. Doctors are service providers, they are not

Stop suppressing information just because you disagree. Give the people the opportunity to come to their own conclusions, then you can assist with any questions they have.

Stop telling people they are too stupid to understand science and that you simply know better.

Stop saying science is settled about autism, or any other brain disorder or side effects of vaccines, when there are serious accusations of fraud about the study and fraud about its efficacy, made by parties that were involved in the study and in the production of said vaccine. Especially when using said study as a reference to reject anything that says otherwise.

Opposition to properly investigating such allegations against vaccines, when their producers are known repeat offenders because they can afford to pay for their offenses over and over, is the biggest red flag of biased, incorrect, or incomplete sources of information.

You want people to leave the research to the professionally trained? You, for instance? Be objective, be transparent, be unbiased, don’t change the subject when unable to answer a question, don’t shove your degree in people’s faces to justify your own opinions. A doctor can have degrees in 20 different specialties, but they are worthless if he doesn’t bother to learn every single thing about his patients.

“Stop telling people they are too stupid to understand science and that you simply know better.”

“You want people to leave the research for the professionally trained? You for instance?”

I think you completely missed the point of my post. I don’t at all believe that people are too stupid to understand science. That’s why I’m trying to help people learn how to evaluate information.

I’m going to go out on a limb and interpret your comment as meaning that you believe the weight of the evidence shows that vaccines cause autism. You’ve indicated that you would like to see more transparency in disclosing funding sources, and more doctor-patient interactions. I agree those are good things.

But what kinds of data, specifically, would change your mind? If you were to design a study that adhered to all standards for protection of human subjects (http://www.hhs.gov/ohrp/regulations-and-policy/belmont-report/), what would be your null hypothesis, and what evidence would refute your null hypothesis?

Great! One thing that you might want to consider adding is some info about understanding differences in evidence and where a certain study falls on the hierarchy of evidence. Like, looking at a single preliminary animal study versus looking at a meta-analysis of 10 different studies in humans.

I have just encountered a Google U fail. Someone was making the claim that deaths go down when doctors go on strike, and then posted this link:

http://www.qcc.cuny.edu/SocialSciences/ppecorino/MEDICAL_ETHICS_TEXT/Chapter_3_Moral_Climate_of_Health_Care/Reading-Death-Rate-Doctor-Strike.htm

What he did not realize was that it was reading material on how to evaluate stuff one reads on teh internets. The first part is the credulous “data” to supply the proof. The last part was a cut and paste of the “Straight Dope” article that discusses the actual evidence. The class was taught by Prof. Philip A. Pecorino, who in the past hosted philosophy discussions for a Center for Inquiry group.

Seriously, read the entire page and figure out why it existed. I suspect that Prof. Pecorino was teaching a class very similar to what you are doing, Jennifer, but for medical issues.

Good post and (mostly) good comments. I would note however, that there are some limits to what is practical or even possible for untrained people to understand. And no, that isn’t due to stupidity, just lack of specialized training. As a biologist, I would have a tough time with papers on, say, brane theory, and few physicists would be able to make much sense of arguments in modern taxonomy. In my case, I don’t have the training in topology, and in the case of our hapless physicist, s/he wouldn’t have the necessary context.

This of course doesn’t mean that comprehension is impossible, just extraordinarily time consuming–I’d have to study group theory and topology to a very high level, our physicist would need a grounding in genetics, morphology, and cladistics.

It is also important for people to avoid hubris–gaining some solid understanding of science is an admirable goal, but limits exist. Ben Goldacre actually doesn’t recommend going to medical journals for most people for this reason–without the context resulting from long training, it is all too easy to come to believe that you know more than you actually do know. An earlier comment here provides a good illustration.

Hi! This is a really great guide. I’m going to use it as I continue to do medical/nutrition literature reviews. Quick question, though: What do you do when someone you know only wants to do things based on their personal experiences? And it starts to affect yours (as in, they generalize their experiences to be true for you as well) when they won’t consider the alternative hypotheses? I’m struggling with a scientifically-inclined family member on this one. They’ve recently experienced severe health crises that I think are psychologically getting in the way of being open-minded. I used to be able to talk science all the time but now… Doing that just creates arguments and judgements of lifestyle. Any ideas on how to encourage that inner scientist again?

Hello,

I am, like many other people who read this, probably going to borrow large chunks.

One thing I found useful when teaching first year business students was a diagram that looked like the classic risk management diagram (along one axis ‘chance of it happening’; along the other “severity if it does happen” and you have to take a lot of notice of events that are both likely and with severe consequences).

The two axes I have taught the students to use when assessing how useful an article is are, “likelihood that the findings are true and up to date” and “relevance of the information”: If an article is not both a) likely to be true and b) containing relevant information then discard it.

This is clearly subjective – one group I taught assessed the articles using colour coding from red to green for likelihood to be true – but it does make the process obvious.

Paul

Simply wonderful article. It is a really gentle explanation to intelligent people of what science is about.

I am a family doctor, trying to teach people how to research their illnesses.

I do not dare trespass where you have gone! But the take-away message I got, of looking for the negative of their ideas, is brilliant, if they only dare do it.